Science

Get the latest breaking news about discoveries in science, health, the environment, and beyond, along with the most recent advancements.

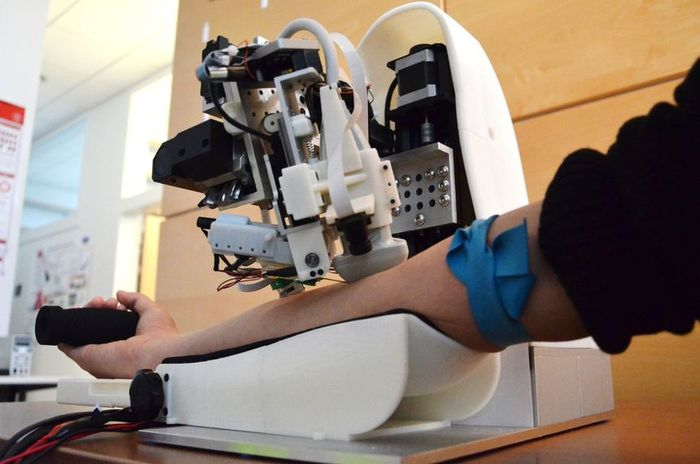

Veebot: a robot that can draw blood from a vein more safely than a human

Drawing blood for analysis is the most widespread medical procedure. The study addresses one of the most vital issues in medicine. About 20%…

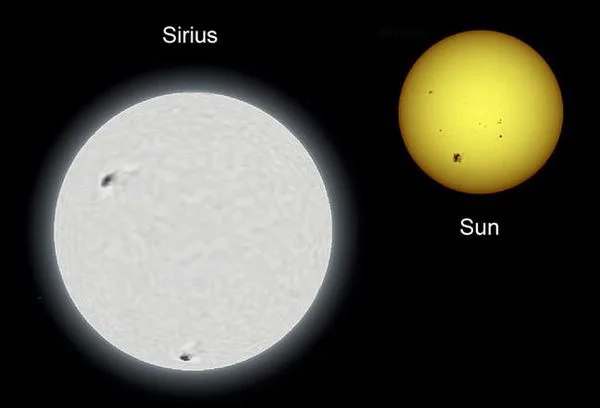

Top 10 Largest Stars In The Universe

Stars are massive plasma spheres that are held together by gravity. As you are probably…

AMADEE-24 Mars Mission Releases Images of Spacesuit Testing in Armenia

The Austrian Space Forum (OeWF) has released the initial photos from the trials of OeWF…

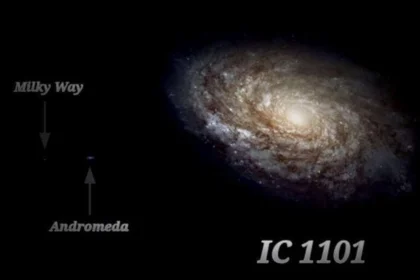

The Largest Galaxy in the Universe

It is estimated that the Universe has roughly 200 billion galaxies, and in this article,…

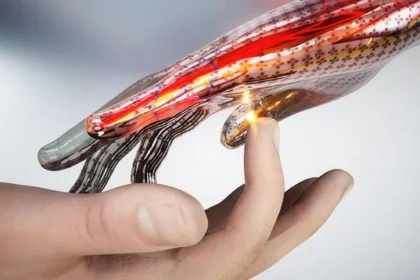

Physicists have created a super-sensitive electronic skin

American physicists have created a super-sensitive electronic skin that matches its biological counterpart in durability and flexibility…

Latest Science

2024 Astronomical Events: The Celestial Calendar

The astronomical calendar for 2024 is rich in vivid space events. During…

Seconds in Space: How Long Could You Survive Without a Spacesuit?

We live in a beautiful place, where everything is colorful and bright.…

Top 10 Potentially Habitable Exoplanets

A planet outside our solar system that revolves around a star is…

The Petrifying Well of Knaresborough Gives Objects a Stone-Like Appearance

The Petrifying Well of Knaresborough is a well located in the English…

The Hubble telescope measured the amount of interstellar dust in the nearest galaxies

Astrophysicists have learned to estimate the amount of interstellar dust in the…

Largest Black Holes in the Universe: A List of Supermassive Black Holes

A black hole is an area of space where the gravity field…

Top 10 Largest Cities in the World by Area

With a global population of about 8 billion, the world's cities are home…

New Exoplanet 55 Cancri f

Astronomers have discovered that one of the planets in the binary star…